Citation Information

Fetterman, D. M. (1998, November 18). Empowerment evaluation and the Internet: A synergistic relationship. Current Issues in Education [On-line], 1(4). Available: http://cie.ed.asu.edu/volume1/number4/.

Empowerment Evaluation and the Internet: A Synergistic Relationship

David M. Fetterman

Stanford University

Abstract

Empowerment

evaluation (EE) is the use of evaluation concepts, techniques, and

findings to foster improvement and self-determination. It has captured

the attention and imagination of many evaluators throughout the world

for its fundamentally democratic process. A wide range of programs use

EE, including substance abuse prevention, indigent health care, welfare

reform, battered women's shelters, adolescent pregnancy prevention,

individuals with disabilities, doctoral programs, and accelerated

schools. Since its introduction in 1993 at the American Evaluation

Association, EE has also enjoyed rapid widespread acceptance and use as

a result of its appearance on the Internet. The Internet is unrivaled

as a mechanism for the distribution of new ideas and has greatly

facilitated the distribution of knowledge about the use of empowerment

evaluation because of its capacity to disseminate information in

nanoseconds and on a global scale. The synergistic relationship between

empowerment evaluation and the Internet offers a model for others as

they develop and refine their own approaches to evaluation. The

worldwide response to EE illustrates the strength and value arising

from the marriage of new ideas and new technology. This paper provides

a context in which to understand the synergistic link between the

approach and the Internet as a powerful dissemination tool. This rapid

distribution and acceptance of new ideas offers a model for colleagues

in any field.

Introduction

Empowerment

evaluation is the use of evaluation concepts, techniques, and findings

to foster improvement and self-determination. It employs both

qualitative and quantitative methodologies. Although it can be applied

to individuals, organizations,[1]

communities, and societies or cultures, the focus is usually on

programs. Empowerment evaluation is a part of the intellectual

landscape of evaluation. A wide range of programs use EE, including

substance abuse prevention, indigent health care, welfare reform,

battered women's shelters, adolescent pregnancy prevention, individuals

with disabilities, doctoral programs, and accelerated schools.

Descriptions of programs that use empowerment evaluation appear in Empowerment Evaluation: Knowledge and Tools for Self-Assessment and Accountability

(Fetterman, Kaftarian, & Wandersman, 1996). In addition, this

approach has been institutionalized within the American Evaluation

Association[2]

and is consistent with the spirit of the standards developed by the

Joint Committee on Standards for Educational Evaluation (Fetterman,

1995b; Joint Committee, 1994).[3]

The

Internet's ability to disseminate information quickly on a global scale

provides an invaluable communication tool for empowerment evaluation.

The Internet and related communication technologies (email, listserv,

videoconferencing, virtual classrooms, and communication centers) not

only aid the dissemination of ideas regarding EE; the resulting on-line

discussions can also lead to refinement of the evaluation process itself.

Communication technologies can also be used to facilitate discussions

among evaluation participants and EE coaches by allowing ongoing

asynchronous and synchronous discussions without the time

investments required by face-to-face meetings.

Empowerment

evaluation has become a worldwide phenomenon since its introduction in

1993 at the American Evaluation Association (Fetterman, 1994). EE's

acceptance is in part a function of timing. Evaluators were already

using forms of participatory self-assessment or were prepared to use it

because it represented the next logical step. Funders and clients were

focusing on program improvement and capacity building. A critical match

between people and common interests was made with an underlying and

often implicit commitment to fostering self-determination. The

widespread use of this evaluation approach, however, was also a result

of its appearance on the Internet. This rapid distribution and

acceptance of new ideas offers a model for colleagues in any field.

A

brief discussion about empowerment evaluation processes and outcomes,

intellectual roots or influences, and steps associated with EE provides

insight into the approach. In addition, this brief introduction to

EE provides a context in which to understand the synergistic link

between the approach and the Internet as a powerful dissemination tool.

Empowerment Evaluation: Processes and Outcomes

Empowerment

evaluation is attentive to empowering processes and outcomes.

Zimmerman's work on empowerment theory provides the theoretical

framework for empowerment evaluation. According to Zimmerman (in press):

A

distinction between empowering processes and outcomes is critical in

order to clearly define empowerment theory. Empowerment processes are

ones in which attempts to gain control, obtain needed resources, and

critically understand one's social environment are fundamental. The

process is empowering if it helps people develop skills so they can

become independent problem solvers and decision makers. Empowering

processes will vary across levels of analysis. For example, empowering

processes for individuals might include organizational or community

involvement; empowering processes at the organizational level might

include shared leadership and decision making; and empowering processes

at the community level might include accessible government, media, and

other community resources.

Empowered outcomes refer to operationalization of

empowerment so we can study the consequences of citizen attempts to

gain greater control in their community or the effects of interventions

designed to empower participants. Empowered outcomes also differ across

levels of analysis. When we are concerned with individuals, outcomes

might include situation specific perceived control, skills, and

proactive behaviors. When we are studying organizations, outcomes might

include organizational networks, effective resource acquisition, and

policy leverage. When we are concerned with community level

empowerment, outcomes might include evidence of pluralism, the

existence of organizational coalitions, and accessible community

resources.

Empowerment evaluation has an unambiguous

value orientation--it is designed to help people help themselves and

improve their programs using a form of self-evaluation and reflection.

Program participants--including clients--conduct their own evaluations;

an outside evaluator often serves as a coach or additional facilitator

depending on internal program capabilities.

Zimmerman's (in

press) characterization of the community psychologist's role in

empowering activities is easily adapted to the empowerment evaluator:

An

empowerment approach to intervention design, implementation, and

evaluation redefines the professional's role relationship with the

target population. The professional's role becomes one of collaborator

and facilitator rather than expert and counselor. As collaborators,

professionals learn about the participants through their culture, their

world view, and their life struggles. The professional works with

participants instead of advocating for them. The professional's skills,

interest, or plans are not imposed on the community; rather,

professionals become a resource for a community. This role relationship

suggests that what professionals do will depend on the particular place

and people with whom they are working, rather than on the technologies

that are predetermined to be applied in all situations. While

interpersonal assessment and evaluation skills will be necessary, how,

where, and with whom they are applied cannot be automatically assumed

as in the role of a psychotherapist with clients in a clinic.

Empowerment evaluation also requires

sensitivity and adaptation to the local setting. It is not dependent

upon a predetermined set of technologies. Empowerment evaluation is

necessarily a collaborative group activity, not an individual pursuit.

An evaluator does not and cannot empower anyone; people empower

themselves, often with assistance and coaching. This process is

fundamentally democratic in the sense that it invites (if not demands)

participation, examining issues of concern to the entire community in

an open forum.

As a result, the context changes: the

assessment of a program's value and worth is not the endpoint of the

evaluation--as it often is in traditional evaluation--but is part of an

ongoing process of program improvement. This new context acknowledges a

simple but often overlooked truth: that merit and worth are not static

values. Populations shift, goals shift, knowledge about program

practices and their values change, and external forces are highly

unstable. By internalizing and institutionalizing self-evaluation

processes and practices, a dynamic and responsive approach to

evaluation can be developed to accommodate these shifts. Both value

assessments and corresponding plans for program improvement--developed

by the group with the assistance of a trained evaluator--are subject to

a cyclical process of reflection and self-evaluation. Program

participants learn to continually assess their progress toward

self-determined goals, and to reshape their plans and strategies

according to this assessment. In the process, self-determination is

fostered, illumination generated, and liberation actualized.

Value

assessments are also highly sensitive to the life cycle of the program

or organization. Goals and outcomes are geared toward the appropriate

developmental level of implementation. Extraordinary improvements are

not expected of a project that will not be fully implemented until the

following year. Similarly, seemingly small gains or improvements in

programs at an embryonic stage are recognized and appreciated in

relation to their stage of development. In a fully operational and

mature program, moderate improvements or declining outcomes are viewed

more critically.

Roots, Influences, and Comparisons

Empowerment evaluation has many sources. The idea first germinated during preparation of another book, Speaking the Language of Power: Communication, Collaboration, and Advocacy

(Fetterman, 1993). In developing that collection, I wanted to explore

the many ways that evaluators and social scientists could give voice to

the people they work with and bring their concerns to policy brokers. I

found that, increasingly, socially concerned scholars in myriad fields

are making their insights and findings available to decision makers.

These scholars and practitioners address a host of significant issues,

including conflict resolution, the high school student drop-out or

push-out problem, environmental health and safety, homelessness,

educational reform, AIDS, American Indian concerns, and the education

of gifted children. The aim of these scholars and practitioners was to

explore successful strategies, share lessons learned, and enhance their

ability to communicate with an educated citizenry and powerful

policy-making bodies. Collaboration, participation, and empowerment

emerged as common threads throughout the work and helped to crystallize

the concept of empowerment evaluation.

Empowerment

evaluation has roots in community psychology, action anthropology, and

action research. Community psychology focuses on people, organizations,

and communities working to establish control over their affairs. The

literature about citizen participation and community development is

extensive. Rappaport's (1987) "Terms of Empowerment/Exemplars of

Prevention: Toward a Theory for Community Psychology" is a classic in

this area. Tax's (1958) work in action anthropology focuses on how

anthropologists can facilitate the goals and objectives of

self-determining groups, such as Native American tribes. Empowerment

evaluation also derives from collaborative and participatory

evaluation. (For references on collaborative and participatory

evaluation see Choudhary & Tandon, 1988; Oja & Smulyan, 1989;

Papineau & Kiely, 1994; Reason, 1988; Shapiro, 1988; Stull &

Schensul, 1987; Whitmore, 1990; Whyte, 1990.)

Empowerment

evaluation has been strongly influenced by and is similar to action

research. Stakeholders typically control the study and conduct the work

in action research and empowerment evaluation. In addition,

practitioners empower themselves in both forms of inquiry and action.

Empowerment evaluation and action research are characterized by

concrete, timely, targeted, pragmatic orientations toward program

improvement. They both require cycles of reflection and action and

focus on the simplest data collection methods adequate to the task at

hand. However, there are conceptual and stylistic differences between

the approaches. For example, empowerment evaluation is explicitly

driven by the concept of self-determination. It is also explicitly

collaborative in nature. Action research, on the other hand, can be

either an individual effort documented in a journal or a group effort.

Written narratives are used to share findings with colleagues (Soffer,

1995). Empowerment evaluation, by contrast, is conducted by a group in a

collaborative fashion, with a holistic focus on an entire program or agency.

Action research is often conducted on top of the normal daily

responsibilities of a practitioner. Empowerment evaluation is

internalized as part of the planning and management of a program. The

institutionalization of evaluation, in this manner, makes it more

likely to be sustainable rather than sporadic. In spite of these

differences, the overwhelming number of similarities between the

approaches has enriched empowerment evaluation.

Another

major influence on the development of EE was the national educational

school reform movement with colleagues such as Henry Levin, whose

Accelerated School Project (ASP) emphasizes the empowerment of parents,

teachers, and administrators to improve educational settings. We worked

to help design an appropriate evaluation plan for the Accelerated

School Project that contributes to the empowerment of teachers,

parents, students, and administrators (Fetterman & Haertel, 1990).

We also mapped out detailed strategies for districtwide adoption of the

project in an effort to help institutionalize the project in the school

system (Stanford University and American Institutes for Research, 1992).

Mithaug's

(1991, 1993) extensive work with individuals with disabilities to

explore concepts of self-regulation and self-determination provided

additional inspiration. We completed a 2-year Department of

Education-funded grant on self-determination and individuals with

disabilities. We conducted research designed to help both providers for

students with disabilities and the students themselves become more

empowered. We learned about self-determined behavior and attitudes and

environmentally related features of self-determination by listening to

self-determined children with disabilities and their providers. Using

specific concepts and behaviors extracted from these case studies, we

developed a behavioral checklist to assist providers as they work to

recognize and foster self-determination.

Self-determination,

defined as the ability to chart one's own course in life, forms the

theoretical foundation of empowerment evaluation. It consists of

numerous interconnected capabilities, such as the ability to identify

and express needs, establish goals or expectations and a plan of action

to achieve them, identify resources, make rational choices from various

alternative courses of action, take appropriate steps to pursue

objectives, evaluate short- and long-term results (including

reassessing plans and expectations and taking necessary detours), and

persist in the pursuit of those goals. A breakdown at any juncture of

this network of capabilities--as well as various environmental

factors--can reduce a person's likelihood of being self-determined.

(See also Bandura, 1982, concerning the self-efficacy mechanism in

human agency.)

A pragmatic influence on empowerment

evaluation is the W. K. Kellogg Foundation's emphasis on empowerment in

community settings. The foundation has taken a clear position

concerning empowerment as a funding strategy:

We've

long been convinced that problems can best be solved at the local level

by people who live with them on a daily basis. In other words,

individuals and groups of people must be empowered to become

changemakers and solve their own problems, through the organizations

and institutions they devise. . . . Through our community-based

programming, we are helping to empower various individuals, agencies,

institutions, and organizations to work together to identify problems

and to find quality, cost-effective solutions. In doing so, we find

ourselves working more than ever with grantees with whom we have been

less involved--smaller, newer organizations and their programs. (Transitions, 1992, p. 6).

The

W. K. Kellogg Foundation's work in the areas of youth, leadership,

community-based health services, higher education, food systems, rural

development, and families and neighborhoods exemplifies this spirit of

putting "power in the hands of creative and committed

individuals--power that will enable them to make important changes in

the world" (Transitions, 1992, p. 13). For example, Kellogg's

Empowering Farm Women to Reduce Hazards to Family Health and Safety on

the Farm involves a participatory evaluation component. The work of

Sanders, Barley, and Jenness (1990) on cluster evaluations for the

Kellogg Foundation also highlights the value of giving ownership of the

evaluation to project directors and staff members of science education

projects.

These influences, activities, and experiences form

the background for this new evaluation approach. An eloquent literature

on empowerment theory by Zimmerman (in press); Zimmerman, Israel,

Schulz, and Checkoway (1992); Zimmerman and Rappaport (1988); and

Dunst, Trivette, and LaPointe (1992), as discussed earlier, also

informs this approach. A brief discussion about the pursuit of truth

and a review of empowerment evaluation's many facets and steps

illustrate its wide-ranging application.

Pursuit of Truth and Honesty

Empowerment

evaluation is guided by many principles. One of the most important

principles is a commitment to truth and honesty. This is not a naive

concept of one absolute truth, but a sincere intent to understand an

event in context and from multiple worldviews. The aim is to try to

understand what is going on in a situation from the participant's own

perspective as accurately and honestly as possible and then proceed to

improve it with meaningful goals and strategies and credible

documentation. There are many checks and balances in empowerment

evaluation, such as having a democratic approach to

participation--involving participants at all levels of the organization

and relying on external evaluators as critical friends.

Empowerment

evaluation is like a personnel performance self-appraisal. You come to

an agreement with your supervisor about your goals, strategies for

accomplishing those goals, and credible documentation to determine if

you are meeting your goals. The same agreement is made with your

clients. If the data are not credible, you lose your credibility

immediately. If the data merit it at the end of the year, you can use

them to advocate for yourself. Empowerment evaluation applies the same

approach to the program and community level. Advocacy, in this context,

becomes a natural byproduct of the self-evaluation process--if the data

merit it. Advocacy is meaningless in the absence of credible data. In

addition, external standards and/or requirements can significantly

influence any self-evaluation. To operate without consideration of

these external forces is to proceed at your own peril. However, the

process must be grounded in an authentic understanding and expression

of everyday life at the program or community level. A commitment to the

ideals of truth and honesty guides every facet and step of empowerment

evaluation.

Facets of Empowerment Evaluation

In

this new context, training, facilitation, advocacy, illumination, and

liberation are all facets--if not developmental stages--of empowerment

evaluation. Rather than additional roles for an evaluator whose primary

function is to assess worth (Scriven, 1967; Stufflebeam, 1994), these

facets are an integral part of the evaluation process. Cronbach's

developmental focus is relevant: the emphasis is on program

development, improvement, and lifelong learning.

Training. In

one facet of empowerment evaluation, evaluators teach people to conduct

their own evaluations and thus become more self-sufficient. This

approach desensitizes and demystifies evaluation and ideally helps

organizations internalize evaluation principles and practices, making

evaluation an integral part of program planning. Too often, an external

evaluation is an exercise in dependency rather than an empowering

experience. In these instances, the process ends when the evaluator

departs, leaving participants without the knowledge or experience to

continue for themselves. In contrast, an evaluation conducted by

program participants is designed to be ongoing and internalized in the

system, creating the opportunity for capacity building.

In

empowerment evaluation, training is used to map out the terrain,

highlighting categories and concerns. It is also used in making

preliminary assessments of program components, while illustrating the

need to establish goals, strategies to achieve goals, and documentation

to indicate or substantiate progress. Training a group to conduct a

self-evaluation can be considered equivalent to developing an

evaluation or research design (as that is the core of the training), a

standard part of any evaluation. This training is ongoing, as new

skills are needed to respond to new levels of understanding. Training

also becomes part of the self-reflective process of self-assessment (on

a program level) in that participants must learn to recognize when more

tools are required to continue and enhance the evaluation process. This

self-assessment process is pervasive in an empowerment

evaluation--built into every part of a program, even to the point of

reflecting on how its own meetings are conducted and feeding that input

into future practice.

In essence, empowerment evaluation is

the "give someone a fish and you feed her for a day; teach her to fish,

and she will feed herself for the rest of her life" concept, as applied

to evaluation. The primary difference is that in empowerment evaluation

the evaluator and the individuals benefiting from the evaluation are

typically on an even plane, learning from each other.

Facilitation.

Empowerment evaluators serve as coaches or facilitators to help others

conduct a self-evaluation. In my role as a coach, I provide general

guidance and direction to the effort, attending sessions to monitor and

facilitate as needed. It is critical to emphasize that the staff are in

charge of their effort; otherwise, program participants initially tend

to look to the empowerment evaluator as expert, which makes them

dependent on an outside agent. In some instances, my task is to clear

away obstacles and identify and clarify miscommunication patterns. I

also participate in many meetings along with internal empowerment

evaluators, providing explanations, suggestions, and advice at various

junctures to help ensure that the process has a fair chance.

An

empowerment evaluation coach can also provide useful information about

how to create facilitation teams (balancing analytical and social

skills), work with resistant (but interested) units, develop refresher

sessions to energize tired units, and resolve various protocol issues.

Simple suggestions along these lines can keep an effort from backfiring

or being seriously derailed. A coach may also be asked to help create

the evaluation design with minimal additional support.

Whatever

her contribution, the empowerment evaluation coach must ensure that the

evaluation remains in the hands of program personnel. The coach's task

is to provide useful information, based on her evaluator's training and

past experience, to keep the effort on course.

Advocacy.

A common workplace practice provides a familiar illustration of

self-evaluation and its link to advocacy on an individual level.

Employees often collaborate with both supervisor and clients to

establish goals, strategies for achieving those goals and documenting

progress, and realistic time lines. Employees collect data on their own

performance and present their case for their performance appraisal.

Self-evaluation thus becomes a tool of advocacy--using credible

documentation of performance to advocate for oneself in terms of a

raise or promotion. This individual self-evaluation process is easily

transferable to the group or program level.

Illumination.

Illumination is an eye-opening, revealing, and enlightening experience.

Typically, a new insight or understanding about roles, structures, and

program dynamics is developed in the process of determining worth and

striving for program improvement (Parlett & Hamilton, 1976).

Empowerment evaluation is illuminating on a number of levels. For

example, on an individual level, an administrator in one empowerment

evaluation, with little or no research background, developed a

testable, researchable hypothesis in the middle of a discussion about

indicators and self-evaluation. It was not only illuminating to her but

to the group as well; it revealed what they could do as a group when

given the opportunity to think about problems and come up with workable

options, hypotheses, and tests. This experience of illumination holds

the same intellectual intoxication each of us experienced the first

time we came up with a researchable question. The process creates a

dynamic community of learners as people engage in the art and science

of evaluating themselves.

Liberation.

Illumination often sets the stage for liberation. EE can unleash

powerful, emancipatory forces for self-determination. Liberation is the

act of being freed or freeing oneself from preexisting roles and

constraints. It often involves new conceptualizations of oneself and

others. Many of the examples in this discussion demonstrate how helping

individuals take charge of their lives--and find useful ways to

evaluate themselves--liberates them from traditional expectations and

roles. They also demonstrate how empowerment evaluation enables

participants to find new opportunities, see existing resources in a new

light, and redefine their identities and future roles.

Steps of Empowerment Evaluation

There

are several pragmatic steps involved in helping others learn to

evaluate their own programs: (a) taking stock or determining where the

program stands, including strengths and weaknesses; (b) focusing on

establishing goals--determining where you want to go in the future with

an explicit emphasis on program improvement; (c) developing strategies

and helping participants determine their own strategies to accomplish

program goals and objectives; and (d) helping program participants

determine the type of evidence required to document progress credibly

toward their goals.

Taking Stock. One of

the first steps in empowerment evaluation is taking stock (step 1).

Program participants are asked to rate their program on a 1 to 10

scale, with 10 being the highest level.[4]

They are asked to make the rating as accurate as possible. Many

participants find it less threatening or overwhelming to begin by

listing, describing, and then rating individual activities in their

program, before attempting a gestalt or overall unit rating. Specific

program activities might include recruitment, admissions, pedagogy,

curriculum, graduation, and alumni tracking in a school setting. The

potential list of components to rate is endless, and each participant

must prioritize the list of items--typically limiting the rating to the

top 10 activities. Program participants are also asked to document

their ratings (both the ratings of specific program components and the

overall program rating). Typically, some participants give their

programs an unrealistically high rating. Peer ratings, a reminder about

the realities of their environment (such as a high dropout rate,

students bringing guns to school, and racial violence in a high school),

and a critique of their documentation help participants recalibrate

their rating. In some cases, however, ratings stay higher than peers

consider appropriate. The significance of this process, however, is not

the actual rating so much as it is the creation of a baseline from

which future progress can be measured. In addition, it sensitizes

program participants to the necessity of collecting data to support

assessments or appraisals.
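
The taking-stock ratings described above can be tabulated in a few lines of code. The following is a minimal sketch, not a procedure prescribed by the approach: the activity names and scores are hypothetical, and summarizing each activity by its mean (and the program by the mean of those means) is an assumption made for illustration. The text only requires that a documented baseline be recorded.

```python
from statistics import mean

# Hypothetical data: each participant rates each program activity
# on the 1-to-10 scale described above (10 is the highest level).
ratings = {
    "recruitment": [6, 7, 5],
    "admissions": [8, 7, 9],
    "curriculum": [4, 6, 5],
}


def take_stock(ratings):
    """Return (mean rating per activity, overall baseline).

    Averaging is an illustrative assumption; a group could just as
    well record a separately negotiated overall rating.
    """
    for activity, scores in ratings.items():
        if not scores:
            raise ValueError(f"no ratings recorded for {activity!r}")
        for score in scores:
            if not 1 <= score <= 10:
                raise ValueError(f"rating {score} for {activity!r} is outside 1-10")
    per_activity = {activity: mean(scores) for activity, scores in ratings.items()}
    overall = mean(list(per_activity.values()))
    return per_activity, overall


def progress_since(baseline, later):
    """Change in each activity's mean rating relative to the baseline."""
    return {activity: later[activity] - baseline[activity] for activity in baseline}


baseline, overall = take_stock(ratings)
print(baseline, round(overall, 2))
```

Storing the baseline this way makes the later comparison explicit: a second round of ratings can be passed through `take_stock` and compared with `progress_since`, which keeps the emphasis on change over time rather than on the initial number.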

Setting Goals.

After rating their program's performance and providing documentation to

support that rating, program participants are asked how highly they

would like to rate their program in the future (step 2). Then they are

asked what goals they want to set to warrant that future rating. These

goals should be established in conjunction with supervisors and clients

to ensure relevance from both perspectives. In addition, goals should

be realistic, taking into consideration such factors as initial

conditions, motivation, resources, and program dynamics.

It

is important that goals be related to the program's activities,

talents, resources, and scope of capability. One problem with

traditional external evaluation is that programs have been given

grandiose goals or long-term goals that participants could only

contribute to in some indirect manner. There was no link between their

daily activities and ultimate long-term program outcomes in terms of

these goals. In empowerment evaluation, program participants are

encouraged to select intermediate goals that are directly linked to

their daily activities. These activities can then be linked to larger,

more diffuse goals, creating a clear chain of outcomes.

Program

participants are encouraged to be creative in establishing their goals.

A brainstorming approach is often used to generate a new set of goals.

Individuals are asked to state what they think the program should be

doing. The list generated from this activity is refined, reduced, and

made realistic after the brainstorming phase, through a critical review

and consensual agreement process.

There are also a

bewildering number of goals to strive for at any given time. As a group

begins to establish goals based on this initial review of their

program, they realize quickly that a consensus is required to determine

the most significant issues to focus on. These are chosen according to

significance to the operation of the program, timing or urgency, and/or

program vision or unifying purpose. For example, teaching is typically

one of the most important components of a school and there are few

things as urgent as recruitment and budget pressures for schools.

Goal

setting can be a slow process when program participants have a heavy

work schedule. Sensitivity to the pacing of this effort is essential.

Additional tasks of any kind and for any purpose may be perceived as

simply another burden when everyone is fighting to keep their heads

above water.

Developing Strategies.

Program participants are also responsible for selecting and developing

strategies to accomplish program objectives (step 3). The same process

of brainstorming, critical review, and consensual agreement is used to

establish a set of strategies. These strategies are routinely reviewed

to determine their effectiveness and appropriateness. Determining

appropriate strategies, in consultation with sponsors and clients, is

an essential part of the empowering process. Program participants are

typically the most knowledgeable about their own jobs, and this

approach acknowledges and uses that expertise--and in the process puts

them back in the "driver's seat."

Documenting Progress.

Program participants are asked what type of documentation is required

to monitor progress toward their goals (step 4). This is a critical

step. Each form of documentation is scrutinized for relevance to avoid

devoting time to collecting information that will not be useful or

relevant. Program participants are asked to explain how a given form of

documentation is related to specific program goals. This review process

is difficult and time-consuming but prevents wasted time and

disillusionment at the end of the process. In addition, documentation

must be credible and rigorous if it is to withstand the criticism that

this evaluation is self-serving. (See Fetterman, 1994, for a detailed

discussion of these steps and case examples.)

|

Dynamic Community of Learners

Many

elements must be in place for empowerment evaluation to be effective

and credible. Participants must have the latitude to experiment, taking

both risks and responsibility for their actions. An environment

conducive to sharing successes and failures is also essential. In

addition, an honest, self-critical, trusting, and supportive atmosphere

is required. Conditions need not be perfect to initiate this process.

However, the accuracy and usefulness of self-ratings improve

dramatically in this context. An outside evaluator who is charged with

monitoring the process can help keep the effort credible, useful, and

on track, providing additional rigor, reality checks, and quality

controls throughout the evaluation. Without any of these elements in

place, the exercise may be of limited utility and potentially

self-serving. With many of these elements in place, the exercise can

create a dynamic community of transformative learning.

|

The Institute: A Case Example

The

California Institute of Integral Studies is an independent graduate

school located in San Francisco. It has been accredited since 1981 by

the Commission for Senior Colleges and Universities of the Western Association of Schools and

Colleges (WASC). The accreditation process requires periodic

self-evaluations. The institute adopted an empowerment evaluation

approach as a tool to institutionalize evaluation as part of the

planning and management of operations and to respond to the

accreditation self-study requirement. Institute faculty, staff members,

and students used empowerment evaluation to assess the merit of a group

(academic or business unit) and establish goals and strategies to

improve program practice. Establishing goals was part of their

strategic planning and was built on the foundation of program

evaluation.

Workshops were conducted throughout the

institute to provide training in evaluation techniques and procedures.

All academic, governance, and administrative unit heads attended the

training sessions held over three days. They served as facilitators in

their own groups. Training and individual technical assistance were

also provided throughout the year for governance and other

administrative groups, including the Office of the President and the

Development Office. (See Appendix A for additional detail.)

The

self-evaluation process required thoughtful reflection and inquiry. The

groups or units described their purpose and listed approximately ten

key unit activities that characterized their unit. Members of the unit

democratically determined the top ten activities that merit

consideration and evaluation. Then each member of a unit rated each

activity on a 1 to 10 scale. Individual

ratings are combined to produce a group or unit rating for each

activity and one for the total unit. Unit members review these ratings.

A sample matrix is provided below to illustrate how this process was

implemented.

STL Wide Self-Evaluation Worksheet

(Participant initials are listed at the top of each column)

| Activities | EK | KK | YT | DE | BH | MT | EG | DF | JA | MC | CJ | PPV | CLS | GJ | Subtotal | Average |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Building Capacity | 7 | 5 | 7 | 5 | 5 | 7 | 5 | 6 | 7 | 8 | 8 | 6 | 7 | 6 | 89 | 6.36 |
| Teaching | 5 | 6 | 8 | 8 | 6 | 8 | 8 | 8 | 8 | 9 | 8 | 6 | 9 | na | 97 | 7.46 |
| Research (activist) | 5 | 7 | 7 | 3 | 6 | 6 | 3 | 8 | 7 | 6 | 4 | 5 | 5 | na | 72 | 5.54 |
| Attract Students | 4 | 5 | 6 | 6 | 7 | 7 | 8 | 6 | 7 | 7 | 7 | 4 | 6 | 6 | 86 | 6.14 |
| Attract Staff/Fac | 4 | 5 | 9 | 6 | 7 | 7 | 6 | 6 | 6 | 5 | 8 | 7 | 6 | 7 | 89 | 6.36 |
| Retain Students | 7 | 5 | 7 | 8 | 7 | 6 | 5 | 6 | 6 | 5 | 8 | 4 | 6 | 8 | 88 | 6.29 |
| Retain Staff/Fac | 4 | 6 | 7 | 6 | 5 | 6 | 5 | 6 | 6 | 6 | 7 | 4 | 6 | 8 | 82 | 5.86 |
| Transformative Learning | 8 | 6 | 6 | 5 | 5 | 7 | 9 | 7 | 7 | 8 | 8 | 8 | 6 | 7 | 97 | 6.93 |
| Infrastructure STL | 5 | 6 | 8 | 6 | 7 | 8 | 8 | 6 | 8 | 5 | 7 | 5 | 6 | 6 | 91 | 6.50 |
| Infrastructure STL/CIIS | 5 | 4 | 8 | 6 | 5 | 8 | 8 | 6 | 6 | 5 | 6 | 4 | 6 | 4 | 81 | 5.79 |
| Dissemination - scholarly | 3 | 6 | 7 | 4 | 6 | 6 | 5 | 8 | 5 | 5 | 6 | 5 | 5 | 8 | 79 | 5.64 |
| Enhance health relationship CIIS | 7 | 6 | 8 | 6 | 6 | 8 | 5 | 7 | 5 | 7 | 8 | 4 | 7 | 5 | 89 | 6.36 |
| Community Building | 6 | 6 | 7 | 6 | 7 | 7 | 6 | 6 | 6 | 8 | 8 | 4 | 5 | 6 | 88 | 6.29 |
| Curriculum dev/refin/eval | 8 | 6 | 7 | 7 | 7 | 7 | 8 | 8 | 8 | 8 | 8 | 7 | 6 | 7 | 102 | 7.29 |
| Experimental pedagogy | 8 | 6 | 9 | 8 | 8 | 8 | 8 | 8 | 8 | 9 | 8 | 7 | 8 | 7 | 110 | 7.86 |
| Diversity | 5 | 4 | 8 | 6 | 5 | 7 | 5 | 4 | 4 | 6 | 8 | 4 | 6 | 6 | 78 | 5.57 |
| Subtotal | 91 | 89 | 119 | 96 | 99 | 113 | 102 | 106 | 104 | 107 | 117 | 84 | 100 | 91 | 1,418 | 102.21 |
| Average (activity) | | | | | | | | | | | | | | | 89 | 6.39 |
| Average (person) | 5.69 | 5.56 | 7.44 | 6.00 | 6.19 | 7.06 | 6.38 | 6.63 | 6.50 | 6.69 | 7.31 | 5.25 | 6.25 | 6.50 | | 6.39 |
| Unit Average | | | | | | | | | | | | | | | | 6.39 |

|
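The arithmetic behind the worksheet above can be sketched in a few lines of Python. This is only an illustration, not the institute's actual tooling: the function name `summarize` and the use of `None` for an "na" cell are assumptions made for the example. The three rows are copied from the worksheet, and "na" entries are left out of both the subtotal and the divisor, which is consistent with the averages the worksheet reports.

```python
from typing import Dict, List, Optional, Sequence, Tuple

Rating = Optional[int]  # None stands in for an "na" cell (an assumption of this sketch)


def summarize(ratings: Sequence[Rating]) -> Tuple[int, float]:
    """Return (subtotal, average) over the ratings that were actually given."""
    given = [r for r in ratings if r is not None]
    if not given:
        raise ValueError("no ratings to summarize")
    subtotal = sum(given)
    return subtotal, round(subtotal / len(given), 2)


# Three rows copied from the worksheet; None marks an "na" entry.
worksheet: Dict[str, List[Rating]] = {
    "Building Capacity": [7, 5, 7, 5, 5, 7, 5, 6, 7, 8, 8, 6, 7, 6],
    "Teaching": [5, 6, 8, 8, 6, 8, 8, 8, 8, 9, 8, 6, 9, None],
    "Research (activist)": [5, 7, 7, 3, 6, 6, 3, 8, 7, 6, 4, 5, 5, None],
}

activity_summary = {name: summarize(row) for name, row in worksheet.items()}

for name, (subtotal, average) in activity_summary.items():
    print(f"{name}: subtotal={subtotal}, average={average:.2f}")
```

Applying the same function to a column instead of a row would give a per-person average, and averaging the activity averages gives the unit figure in the last row.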

Unit

members discuss and dissect the meaning of the activities listed in the

matrix and the ratings given to each activity. This exchange provides

unit members with an opportunity to establish norms concerning the

meaning of terms and ratings in an open and collegial atmosphere. Unit

members are also required to provide evidence or documentation for each

rating and/or to identify areas in which additional documentation is

needed. These self-evaluations represent the first baseline data about

program and unit operations concerning the entire institute. This

process is superior to survey methods, for example, for three reasons:

(a) unit members determine what to focus on to improve their own

programs -- improving the validity of the effort and the buy-in

required to implement recommendations; (b) all members of the community

are immersed in the evaluation experience, making the process of

building a culture of evidence and a community of learners as important

as the specific evaluative outcomes; and (c) there is a 100% return

rate (as compared with typically low return rates for surveys).

These

self-evaluations have already been used to implement specific

improvements in program practice. This process has been used to place

old problems in a new light, leading to solutions, adaptations, and new

activities for the future. It has also been used to reframe existing

data from traditional sources, enabling participants to give meaningful

interpretation to data they have already collected. In addition,

self-evaluations have been used to ensure programmatic and academic

accountability. For example, the psychology program decided to

discontinue part of its program as part of the self-evaluation process.

This was a significant WASC (accreditation agency) and institute

concern of long standing. The core of the problem was that there were

not enough faculty in the program to properly serve their students. The

empowerment evaluation process provided a vehicle for the faculty to

come to terms with this problem in an open, self-conscious manner. They

were dedicated to serving students properly, but when they finally sat

down and analyzed faculty-student ratios and faculty dissertation loads,

the problem became self-evident. (The faculty had complained about the

work load and working conditions before but they had never consciously

analyzed, diagnosed, and documented this problem because they did not

have the time or a simple, nonthreatening mechanism to assess

themselves.) Empowerment evaluation provided institute faculty with a

tool to evaluate the program in light of scarce resources and make an

executive decision to discontinue the program. Similarly, the all

on-line segment of one of the institute's Ph.D. programs has been

administratively merged with a distance learning component of the same

program as a result of this self-evaluative process. This was done to

provide greater efficiencies of scale, improved monitoring and

supervision, and more face-to-face contact with the institute. (See

Fetterman, 1996a and 1996b, for a description of one of these on-line

educational programs.)

The institute also used empowerment

evaluation to build plans for the future based on these evaluations.

All units at the institute completed their plans for the future (a 100%

return rate), and these data were used to design an overall strategic

plan. This process ensures community involvement and commitment to the

effort, generating a plan that is grounded in the reality of unit

practice. The provost has institutionalized this process by requiring

self-evaluations and unit plans on an annual basis to facilitate

program improvement and contribute to institutional accountability.

All

groups in the institute--including academic, governance, and

administrative units--have conducted both the self-evaluations and

their plans for the future. The purpose of these efforts is to improve

operations and build a base for planning and decision making. In

addition to focusing on improvement, these self-evaluations and plans

for the future contribute to institutional accountability.

|

Caveats and Concerns

Is Research Rigor Maintained? This

case study presented a picture of how research and evaluation rigor is

maintained. Mechanisms employed to maintain rigor included workshops

and training, democratic participation in the evaluation (ensuring that

majority and minority views are represented), quantifiable rating

matrices to create a baseline to measure progress, discussion and

definition of terms and ratings (norming), scrutinizing documentation,

and questioning findings and recommendations. These mechanisms help

ensure that program participants are critical, analytical, and honest.

Adaptations, rather than significant compromises, are required to

maintain the rigor required to conduct these evaluations. Although I am

a major proponent of individuals taking evaluation into their own hands

and conducting self-evaluations, I recognize the need for adequate

research, preparation, and planning. These first discussions need to be

supplemented with reports, texts, workshops, classroom instruction, and

apprenticeship experiences if possible. Program personnel new to

evaluation should seek the assistance of an evaluator to act as coach,

assisting in the design and execution of an evaluation. Further, an

evaluator must be judicious in determining when it is appropriate to

function as an empowerment evaluator or in any other evaluative role.

Does This Abolish Traditional Evaluation? New

approaches, such as EE, require a balanced assessment. A strict

constructionist perspective may strangle a young enterprise; too

liberal a stance is certain to transform a novel tool into another fad.

Colleagues who fear that we are giving evaluation away are right in one

respect--we are sharing it with a much broader population. But those

who fear that we are educating ourselves out of a job are only

partially correct. Like any tool, empowerment evaluation is designed to

address a specific evaluative need. It is not a substitute for other

forms of evaluative inquiry or appraisal. We are educating others to

manage their own affairs in areas they know (or should know) better

than we do. At the same time, we are creating new roles for evaluators

to help others help themselves.

How Objective Can a Self-Evaluation Be? Objectivity

is a relevant concern. We need not belabor the obvious point that

science and specifically evaluation have never been neutral. Anyone who

has had to roll up her sleeves and get her hands dirty in program

evaluation or policy arenas is aware that evaluation, like any other

dimension of life, is political, social, cultural, and economic. It

rarely produces a single truth or conclusion. In the context of a

discussion about self-referent evaluation, Stufflebeam (1994) states,

As

a practical example of this, in the coming years U.S. teachers will

have the opportunity to have their competence and effectiveness

examined against the standards of the National Board for Professional

Teaching Standards and if they pass to become nationally certified. (p.

331)

Regardless of one's position on this issue,

evaluation in this context is a political act. What Stufflebeam

considers an opportunity, some teachers consider a threat to their

livelihood, status, and role in the community. This can be a screening

device in which social class, race, and ethnicity are significant

variables. The goal is "improvement," but the questions of for whom and

at what price remain valid. Evaluation in this context or any other is

not neutral--it is for one group a force of social change, for another

a tool to reinforce the status quo.

Greene (1997) explained

that "social program evaluators are inevitably on somebody's side and

not on somebody else's side. The sides chosen by evaluators are most

importantly expressed in whose questions are addressed and, therefore,

what criteria are used to make judgments about program quality" (p.

25). She points out how Campbell's work (1971) focuses on policy

makers, Patton's (1997) on onsite program administrators and board

members, Stake's (1995) on onsite program directors and staff, and

Scriven's (1993) on the needs of program consumers. These are not

neutral positions; they are, in fact, positions of de facto advocacy

based on the stakeholder focal point in the evaluation.

According

to Stufflebeam (1994), "Objectivist evaluations are based on the theory

that moral good is objective and independent of personal or merely

human feelings. They are firmly grounded in ethical principles,

strictly control bias or prejudice in seeking determinations of merit

and worth..." To assume that evaluation is all in the name of science

or that it is separate, above politics, or "mere human

feelings"--indeed, that evaluation is objective--is to deceive oneself

and to do an injustice to others. Objectivity functions along a

continuum--it is not an absolute or dichotomous condition of all or

none. Fortunately, such objectivity is not essential to being critical.

For example, I support programs designed to help dropouts pursue their

education and prepare for a career; however, I am highly critical of

program implementation efforts. If students are not attracted to the

program, or the program is operating poorly, it is doing a disservice

both to former dropouts and to taxpayers.

One needs only to

scratch the surface of the "objective" world to see that values,

interpretations, and culture shape it. Whose ethical principles are

evaluators grounded in? Do we all come from the same cultural,

religious, or even academic tradition? Such an ethnocentric assumption

or assertion flies in the face of our accumulated knowledge about

social systems and evaluation. Similarly, assuming that we can

"strictly control bias or prejudice" is naive, given the wealth of

literature available on the subject, ranging from discussions about

cultural interpretation to reactivity in experimental design.

What About Participant or Program Bias? The

process of conducting an empowerment evaluation requires the

appropriate involvement of stakeholders. The entire group--not a single

individual, not the external evaluator or an internal manager--is

responsible for conducting the evaluation. The group thus can serve as

a check on individual members, moderating their various biases and

agendas. No individual operates in a vacuum. Everyone is accountable in

one fashion or another and thus has an interest or agenda to protect. A

school district may have a 5-year plan designed by the superintendent;

a graduate school may have to satisfy requirements of an accreditation

association; an outside evaluator may have an important but demanding

sponsor pushing either time lines or results, or may be influenced by

training to use one theoretical approach rather than another.

In

a sense, empowerment evaluation minimizes the effect of these biases by

making them an explicit part of the process. The example of a

self-evaluation in a performance appraisal is useful again here. An

employee negotiates with his or her supervisor about job goals,

strategies for accomplishing them, documentation of progress, and even

the time line. In turn, the employee works with clients to come to an

agreement about acceptable goals, strategies, documentation, and time

lines. All of this activity takes place within corporate,

institutional, and/or community goals, objectives, and aspirations. The

larger context, like theory, provides a lens through which to design a

self-evaluation. Self-serving forms of documentation do not easily

persuade supervisors and clients. Once an employee loses credibility

with a supervisor, it is difficult to regain it. The employee thus has

a vested interest in providing authentic and credible documentation.

Credible data (as agreed on by supervisor and client in negotiation

with the employee) serve both the employee and the supervisor during

the performance appraisal process.

Applying this approach to

the program or community level, superintendents, accreditation

agencies, and other "clients" require credible data. Participants in an

empowerment evaluation thus negotiate goals, strategies, documentation,

and time lines. Credible data can be used to advocate for program

expansion, redesign, and/or improvement. This process is an open one,

placing a check on self-serving reports. It provides an infrastructure

and network to combat institutional injustices. It is a highly (often

brutally) self-critical process. Program staff members and participants

are typically more critical of their own program than an external

evaluator, often because they are more familiar with their program and

would like to see it serve its purpose(s) more effectively.5

Empowerment evaluation is successful because it adapts and responds to

existing decision-making and authority structures on their own terms

(Fetterman, 1993). It also provides an opportunity and a forum to

challenge authority and managerial facades by providing data about

actual program operations--from the ground up.

Empowerment

evaluation is particularly valuable for disenfranchised people and

programs to ensure that their voices are heard and that real problems

are addressed. However, this kind of transformation in the field of

evaluation does not happen by itself. It requires hard work, a series

of successful efforts, and tools to communicate what works and what

does not work. The Internet has been an invaluable dissemination model

in this regard.

|

Internet and Related Technologies: An Effective Dissemination Model

Internet

The

Internet has greatly facilitated the distribution of knowledge about

and the use of empowerment evaluation. Home pages, listservs, virtual

classrooms and conferences, videoconferencing on the net, and

publishing on the net represent powerful tools allowing one to

communicate with large numbers of people, respond to inquiries in a

timely fashion, disseminate knowledge, reach isolated populations

around the globe, and create a global community of learners. This brief

discussion highlights the value of the Internet to facilitate

communication about and understanding of empowerment evaluation. (All

the web locations discussed in this article can be found at http://www.stanford.edu/~davidf and http://www.stanford.edu/~davidf/webresources.html.)

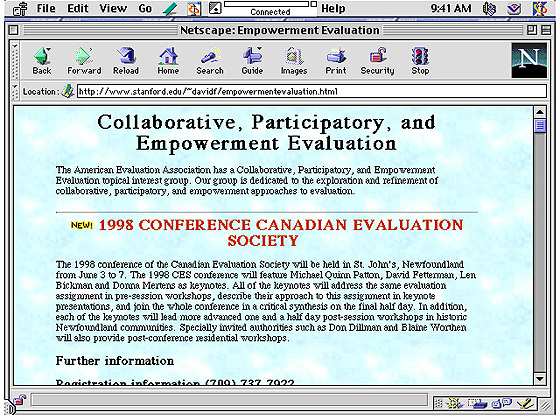

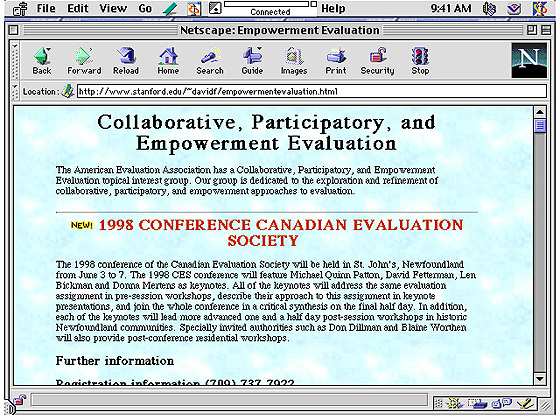

Home Pages

The

Collaborative, Participatory, and Empowerment Evaluation home page has

been a useful mechanism with which to share information about specific

projects, professional association activity, book reviews, and related

literature. In the spirit of self-reflection and critique, positive and

negative reviews of empowerment evaluation are posted on the page

(Brown, 1997; Patton, 1998; Scriven, 1998; Sechrest, 1997; Wild, 1997).

The Collaborative, Participatory, and Empowerment Evaluation topical

interest group's electronic newsletter is also accessible through this

home page. The home page has been a useful networking tool as well,

providing names, e-mail and snail mail addresses, telephone numbers,

and faxes. It provides links to related participatory evaluation sites,

such as the Harvard Evaluation Exchange and the Health Promotion

Internet Lounge's Measurement of Community Empowerment site, free

software, InnoNet's Web Evaluation Toolbox (a web based self-evaluation

program), and other web-based evaluation tools to help colleagues

conduct their own evaluations (http://www.stanford.edu/~davidf/empowermentevaluation.html).

This

is a computer screen snapshot of the American Evaluation Association's

Collaborative, Participatory, and Empowerment Evaluation home page,

highlighting the Canadian Evaluation Society's Conference.

Listservs

Listservs

are lists of e-mail addresses of subscribers with a common interest.

Members of the listserv who send messages to the listserv's e-mail

address communicate with every listserv subscriber within nanoseconds.

Listservs thus provide a simple way to communicate rapidly and

inexpensively with a large number of people. The EE listserv also

periodically posts employment opportunities and is used to discuss

various issues, such as the difference between collaborative,

participatory, and empowerment evaluation. Both seasoned colleagues and

doctoral students have posted problems or questions and received prompt

and generous advice and support.

Listservs do have some

limitations: Periodically they can inundate users with marginally

relevant discussions. In addition, a misconfigured server can generate

an endless loop of postings, potentially bringing down an entire

system. This is a rare occurrence, but one that did bring down an

earlier empowerment evaluation listserv that had to be replaced.

Another problem is that information is hard to track and follow after

the immediate discussion because it is not organized or stored

according to topic.

Virtual Classrooms and Conference Centers

Virtual

classrooms and conference centers allow members to post messages in

folders, in contrast with a listserv's stream of e-mail, which is

typically commingled with unrelated e-mail messages. Folders are

labeled by topic, attracting colleagues with similar interests,

concerns, and questions to the same location on the web. The benefit of

a virtual classroom or conference center over a listserv is that it

provides the reader with a "thread" of conversation. Although comments

are posted asynchronously, the postings or material read like a

conversation. Colleagues have time to think before responding, consult

a colleague or a journal, compose their thoughts, and then post a

response to an inquiry. In addition, virtual classrooms and conference

centers, similar to listservs, enable evaluators to communicate

according to their own schedules and in dispersed settings (see

Fetterman, 1996a, for additional detail).
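The difference between a listserv's single stream and topic-labeled folders can be pictured with a small Python sketch. Everything in it is hypothetical: the `Post` record, the folder names, and the sample messages are invented for illustration and do not describe the software used in the project. The point is only that grouping by topic while keeping arrival order turns an interleaved stream into readable threads.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple


class Post(NamedTuple):
    order: int   # arrival order, standing in for a timestamp
    topic: str   # folder label
    author: str
    text: str


# Hypothetical posts, in the interleaved order a listserv would deliver them.
stream = [
    Post(1, "mission", "teacher_a", "Draft mission statement"),
    Post(2, "jobs", "colleague_b", "Evaluator position open"),
    Post(3, "mission", "coach", "Comment on the draft mission"),
    Post(4, "activities", "teacher_c", "List of key activities"),
    Post(5, "mission", "teacher_a", "Revised mission statement"),
]


def to_folders(posts: List[Post]) -> Dict[str, List[Post]]:
    """Group posts by topic, keeping arrival order inside each folder."""
    folders: Dict[str, List[Post]] = defaultdict(list)
    for post in sorted(posts, key=lambda p: p.order):
        folders[post.topic].append(post)
    return dict(folders)


folders = to_folders(stream)
mission_thread = [p.order for p in folders["mission"]]

for topic, posts in folders.items():
    print(topic, [p.text for p in posts])
```

Read from the "mission" folder, posts 1, 3, and 5 form one conversation, even though two unrelated messages arrived in between.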

The virtual

classroom or conference center has also been an ideal medium in which

to work with program participants whose remote locations make travel impractical.

For example, a group of eighth grade teachers initially sent an e-mail

requesting assistance to conduct an empowerment evaluation of their

program in Washington state. It was not possible for us to travel at

the time or to extract ourselves from our daily obligations. Instead,

we used the virtual classroom and conference center to interact with

the teachers.

The teachers posted their mission and listed

critical activities associated with their eighth grade program in the

virtual classroom or conference center. My colleagues and I then

commented on their postings and coached them as they moved from step to

step. The virtual classroom and conference center also has a virtual

chat section allowing spontaneous and synchronous communication. This

feature more closely approximates typical face-to-face interaction

because it is "conversation or chatting" (by typing messages back and

forth) in real time. However, real-time exchanges limit the users'

flexibility in responding, since they have to be sitting at the

computer at the same time as their colleagues to communicate. The

advantage of asynchronous communication, such as e-mail and the virtual

classroom, is that participants do not have to be communicating at the

same time; instead they can respond to each other according to their

own schedules and time zones. (See http://www.stanford.edu/~davidf/virtual.html for a virtual classroom demonstration.)

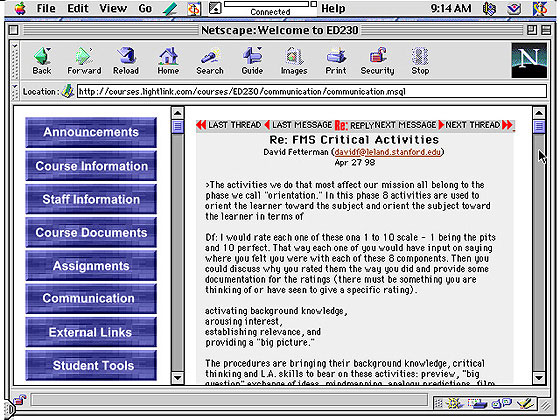

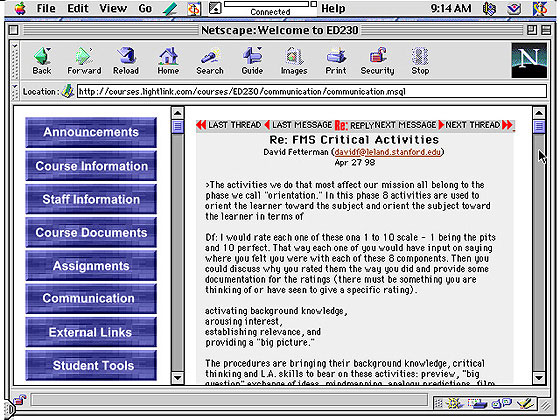

This

is a computer screen snapshot of eighth grade teachers from the state

of Washington posting some of their activities in the virtual

classroom, along with our critique from Stanford, California.

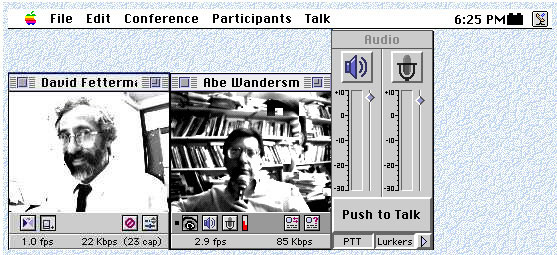

Videoconferencing

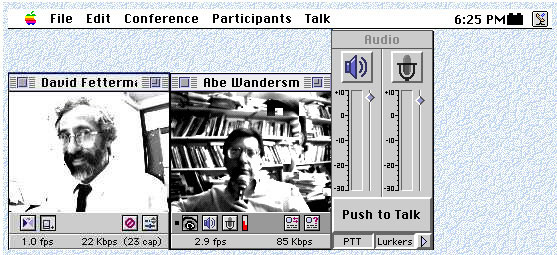

Videoconferencing

on the Internet involves two or more people seeing and talking to each

other through their computer screens or small group conferences in

which people converse at Internet reflector sites (places on the

Internet where people congregate and talk to each other). The software

is free or inexpensive, and there are no long-distance charges at

present over the Internet. Videoconferencing software, such as CU-SeeMe

or iVisit, enables people in remote settings to speak to each other

audibly or by typing instantaneous messages and to see each other (with

some minor delays). Abe Wandersman, from the University of South

Carolina, and David Fetterman, from Stanford University, use

videoconferencing as a tool to discuss problems and major issues

emerging from empowerment evaluation practice. (See http://www.stanford.edu/~davidf/videoconference.html for more information about this tool, and see Fetterman, 1996b; 1998c.)

This

is a computer screen snapshot of David Fetterman from Stanford

University, and Abraham Wandersman, from the University of South

Carolina, videoconferencing about plans for the next empowerment

evaluation collection.

Publishing

Internet

publishing represents an alternative to printed publications. Some

estimates put the number of online scholarly journals at over 500 (http://ejournals.cic.net). Refereed on-line journals, such as Education Policy Analysis Archives (http://olam.ed.asu.edu/epaa/),

represent an emerging and exciting vehicle for sharing educational

research and evaluation insights and findings in real time. Articles

can be reviewed by a larger number of colleagues in a much shorter

period of time using e-mail. In addition, articles can be published

electronically much more quickly than in traditional media. Colleagues

can critique these electronically published pieces more rapidly, which

allows authors to revise their work in less time than it would take to

publish an original document traditionally. Moreover, the low cost of

electronic publication allows journals to be accessed without cost to

readers. This medium also allows authors to publish their raw data,

including their interviews, linked to the same "page." This allows the

reader to analyze the data immediately and to sort those data according to

their own theoretical orientation. (See an illustration at http://olam.ed.asu.edu/epaa/v5n1.html.)

Some

colleagues and publishers are concerned about copyright issues.

However, norms are developing in this area, and publishing conventions

are being successfully applied to this medium (Burbules & Bruce,

1995). I have published articles on the Internet, and our book, Empowerment Evaluation: Knowledge and Tools for Self-Assessment and Accountability

(1996), was distributed both in traditional print format and over the

Internet. We have not experienced any abuse of privilege in this area.

We have, however, experienced a rapid and exponentially expanded

distribution of ideas. (See Fetterman, 1998a, about "learning with and

about technology" in Meridian, a middle school computer technology

oriented on-line journal, at http://www2.ncsu.edu/unity/lockers/project/meridian/index.html. See also Fetterman, 1998b, for another online article about teaching in the virtual classroom.)

|

Conclusion

Empowerment

evaluation has captured the attention and imagination of many

evaluators throughout the world. Although characterized as a movement

by some evaluators (Scriven, 1998; Sechrest, 1997) because of its

worldwide acceptance and the enthusiasm displayed by program

participants, staff members, and empowerment evaluation coaches engaged

in the process of improving programs and building capacity, empowerment

evaluation is neither a movement nor a panacea. It is an approach that

requires systematic and continual critical reflection and feedback.

Advocacy, a potentially controversial feature of the approach, is

warranted only if the data merit it.

Empowerment evaluation

is fundamentally a democratic process. The entire group--not a single

individual, not the external evaluator or an internal manager--is

responsible for conducting the evaluation. The group thus can serve as

a check on its own members, moderating the various biases and agendas

of individual members. The evaluator is a coequal in this endeavor, not

a superior and not a servant; as a critical friend, the evaluator can

question shared biases or "group think."

As is the case in

traditional evaluation, everyone is accountable in one fashion or

another and thus has an interest or agenda to protect. A school

district may have a five-year plan designed by the superintendent; a

graduate school may have to satisfy requirements of an accreditation

association; an outside evaluator may have an important but demanding

sponsor pushing either timelines or results, or may be influenced by

training to use one theoretical approach rather than another.

Empowerment evaluations, like all other evaluations, exist within a

context. However, the range of intermediate objectives linking what

most people do in their daily routine and macro goals is almost

infinite. People often feel empowered and self-determined when they can

select meaningful intermediate objectives that are linked to larger,

global goals.

Despite its focus on self-determination and

collaboration, empowerment evaluation and traditional external

evaluation are not mutually exclusive--on the contrary, they enhance

each other. In fact, the empowerment evaluation process produces a rich

data source that enables a more complete external examination. In the

empowerment evaluation design developed in response to the school's

accreditation self-study requirement presented in this discussion, a

series of external evaluations were planned to build on and enhance

self-evaluation efforts. A series of external teams were invited to

review specific programs. They determined the evaluation agenda in

conjunction with department faculty, staff, and students. However, they

operated as critical friends providing a strategic consultation rather

than a compliance or traditional accountability review. Participants

agreed on the value of an external perspective to add insights into

program operation, serve as an additional quality control, sharpen

inquiry, and improve program practice. External evaluators can also

help determine the merit and worth of various activities. An external

evaluation is not a requirement of empowerment evaluation, but the two

are certainly not mutually exclusive. Greater coordination between the

needs of the internal and external forms of evaluation can provide a

reality check concerning external needs and expectations for insiders,

and a rich data base for external evaluators.

The external

evaluator's role and productivity are also enhanced by the presence of

an empowerment or internal evaluation process. Most evaluators operate

significantly below their capacity in an evaluation because the program

lacks even rudimentary evaluation mechanisms and processes. The

external evaluator routinely devotes time to the development and

maintenance of elementary evaluation systems. Programs that already

have a basic self-evaluation process in place enable external

evaluators to begin operating at a much more sophisticated level.

Empowerment

evaluation has enjoyed rapid widespread acceptance and use. The

Internet's capacity to disseminate information about empowerment

evaluation in nanoseconds and on a global scale contributed

substantially to this rapid dissemination of ideas. The synergistic

relationship between empowerment evaluation and the Internet offers a

model for others as they develop and refine their own approaches to

evaluation. The Internet is unrivaled as a mechanism for the

distribution of new ideas (particularly given the limited expense

associated with the endeavor). The worldwide response to EE illustrates

the strength and value arising from the marriages of new ideas and new

technology.

|

Author

David M. Fetterman

is a member of the faculty and the Director of the MA Policy Analysis

and Evaluation Program in the School of Education at Stanford

University. He was formerly Professor and Research Director at the

California Institute of Integral Studies; Principal Research Scientist

at the American Institutes for Research; and a Senior Associate and

Project Director at RMC Research Corporation. He received his Ph.D.

from Stanford University in educational and medical anthropology. He

has conducted fieldwork in both Israel (including living on a kibbutz)

and the United States (primarily in inner cities across the country).

David works in the fields of educational evaluation, ethnography, and

policy analysis, and focuses on programs for dropouts and gifted and

talented education. He can be reached by e-mail at davidf@leland.stanford.edu.

|

References

Bandura, A. (1982). Self-efficacy mechanism in human agency. American Psychologist, 37, 122-147.

Brown, J. (1997). Book review of empowerment evaluation: Knowledge and tools for self-assessment and accountability. Health Education & Behavior, 24(3), 388-391.

Burbules, N. C., & Bruce, B. C. (1995). This is not a paper. Educational Researcher, 24(8), 12-18.

Campbell,

D. T. (1971). Methods for the experimenting society. Paper presented at

the annual meeting of the American Psychological Association,

Washington, D.C.

Choudhary, A., & Tandon, R. (1988). Participatory evaluation. New Delhi, India: Society for Participatory Research in Asia.

Committee on Institutional Cooperation (1997). The Committee on Institutional Cooperation electronic journals collection [on-line]. Available at http://ejournals.cic.net/.

Dunst, C. J., Trivette, C. M., & LaPointe, N. (1992). Toward clarification of the meaning and key elements of empowerment. Family Science Review, 5(1-2), 111-130.

Glass, G. V. (Ed.). (1993). Education Policy Analysis Archives [on-line]. Available at http://olam.ed.asu.edu/epaa/.

Glass, S. R. (1997). Markets and myths: Autonomy in public and private schools. Education Policy Analysis Archives, 5(1) [on-line]. Available at http://olam.ed.asu.edu/epaa/v5n1.html.

Fetterman, D. M. (1993). Speaking the language of power: Communication, collaboration, and advocacy (Translating Ethnography into Action). London, England: Falmer Press.

Fetterman, D. M. (1994). Empowerment evaluation (presidential address). Evaluation Practice, 15(1), 1-15.

Fetterman,

D. M. (1995b). In response to Dr. Daniel Stufflebeam's: Empowerment

evaluation, objectivist evaluation, and evaluation standards: Where the

future of evaluation should not go and where it needs to go. Evaluation Practice, 16(2), 179-199.

Fetterman, D. M. (1996a). Ethnography in the virtual classroom. Practicing Anthropology, 18(3), 36-39.

Fetterman, D. M. (1996b). Videoconferencing on-line: Enhancing communication over the Internet. Educational Researcher, 25(4), 23-27.

Fetterman, D. M. (1997). Videoconferencing over the Internet [on-line]. Available at http://www.stanford.edu/~davidf/videoconference.html.

Fetterman, D. M. (1998a). Learning with and about technology: A middle school nature area. Meridian, 1(1) [on-line]. Available at http://www2.ncsu.edu/unity/lockers/project/meridian/index.html.

Fetterman, D. M. (1998b). Teaching in the virtual classroom at Stanford University. The Technology Source [on-line]. Available at http://horizon.unc.edu/TS/cases/1998-08.asp.

Fetterman, D. M. (1998c). Webs of meaning: Computer and Internet resources for educational research and instruction. Educational Researcher, 27(3), 22-30.

Fetterman,

D. M., & Haertel, E. H. (1990). A school-based evaluation model for

accelerating the education of students at-risk. Clearinghouse on Urban

Education. (ERIC Document Reproduction Service No. ED 313 495)

Fetterman, D. M., Kaftarian, S., & Wandersman, A. (1996). Empowerment evaluation: Knowledge and tools for self-assessment and accountability. Thousand Oaks, CA: SAGE.

Greene, J. C. (1997). Evaluation as advocacy. Evaluation Practice, 18(1), 25-35.

Joint Committee on Standards for Educational Evaluation. (1994). The program evaluation standards. Thousand Oaks, CA: SAGE.

Mithaug, D. E. (1991). Self-determined kids: Raising satisfied and successful children. New York: Macmillan (Lexington imprint).

Mithaug, D. E. (1993). Self-regulation theory: How optimal adjustment maximizes gain. New York: Praeger.

Oja, S. N., & Smulyan, L. (1989). Collaborative action research. Philadelphia: Falmer.

Papineau,

D., & Kiely, M. C. (1994). Participatory evaluation: Empowering

stakeholders in a community economic development organization. Community Psychologist, 27(2), 56-57.

Parlett,

M., & Hamilton, D. (1976). Evaluation as illumination: A new

approach to the study of innovatory programmes. In D. Hamilton (Ed.), Beyond the numbers game. London: Macmillan.

Patton, M. (1997). Utilization-focused evaluation, new century edition. Thousand Oaks, CA: SAGE.

Patton, M. (1998). Toward distinguishing empowerment evaluation and placing it in a larger context. Evaluation Practice, 18(2), 147-163.